Today’s post is by poet and filmmaker Dave Malone, AI-assisted by Claude AI (editing and drafting assistance) and edited by PGB (a human editor).

I was curious to see if Claude AI could write poetry as good as my own. In September, I ran an experiment on TikTok. I shared two poems titled “Autumn Witness”—one written by me, one by Claude AI after I shared my work and asked him to write in my voice and style. I asked my followers to guess which poem was mine.

Seventy-six percent got it wrong. They chose Claude’s poem.

The comments revealed something that could be unsettling to some. “I resonated with #1 more, more positive, more spiritual,” wrote one follower about Claude’s poem.

Another declared with total confidence: “#1 is you and better, far better.” Some of my followers were just as sure of the opposite: “#1 is Claude using common phrases. #2 matching helicopter leaves and dervishes is a human mind connecting disparate similarities.” And others revealed the difficult truth: “I am annoyed that this is as hard as it is.”

A week later, I did a similar experiment at Missouri State-West Plains’ Ozarks Symposium. Fifty-nine percent thought Claude wrote my poem.

These experiments were hatched out of simple curiosity but led me to consider more complex issues. If AI can create art as compelling as human-made art, then surely audiences deserve transparency. Artists, in turn, need to be transparent about their AI use.

I’m a poet and screenwriter from the Missouri Ozarks, and I’ve been AI-curious since the beginning. For me, Claude started out as a research assistant, and I called him “an amped-up Google machine.” Things have changed. I use Claude almost daily in my writing practice, and he has become a valued sounding board as well as an editor for my poetry, screenplays, film projects, book submissions, and this article you are reading now. I have moved from AI-curious to AI-positive, and I know transparency matters.

The creative industry doesn’t have agreed-upon standards for transparency when it comes to AI use. Artists don’t know how to disclose usage, and consequently audiences don’t know if they’re getting AI or human work. Some creators are upfront about their AI use while others hide it. Many are somewhere in the murky middle.

Given this problem, I created a solution.

Two-category solution

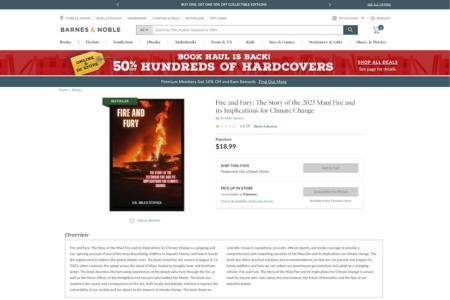

The AACC (AI Attribution and Creative Content) is an open-source transparency framework with two simple categories.

AI-Assisted means the creator originated and drove the work, with AI contributing along the way.

AI-Generated means AI created the primary content from the creator’s prompts and direction, and the creator’s role was conceptual and editorial.

That’s it. Two categories that cover the spectrum of how artists work with AI.

![Graphic created by the author titled AI ATTRIBUTION AND CREATIVE CONTENT (AACC): A transparency framework for creators. (This framework is for educational and discussion purposes, not legal purposes.) Box 1 contains the following: AI-ASSISTED • The creator originates and drives the work • AI contributes through generation, modification, or enhancement at any stage • The creator makes all major creative decisions and is responsible for the final work Attribution: By [Creator Name], AI-assisted by [AI Name] Examples Writing: "Mercury." By Sarah Chen, AI-assisted by Apertus (word choice and line break suggestions) Film: Dir. by Chris Taylor, AI-assisted by Runway ML (visual effects and scene ...) Music: "Mars." By Luna Rivers, AI-assisted by Wondera (harmonic progressions and arrangement) Visual Art: Jupiter. By Jamila Banks, AI-assisted by Midjourney (background textures and composition elements) Box 2 contains the following: AI-GENERATED • AI creates the primary content from the creator's prompts and direction • The creator's role is conceptual, curatorial, and editorial Attribution: AI-generated by [AI Name], concept by [Creator Name] Examples Writing: "Saturn." AI-generated by Apertus, concept by Jordan Lee (Lee provided plot outline and characters; AI wrote 5,000-word short story draft; Lee edited for voice and pacing) Film: Uranus. AI-generated by Runway ML, concept by Sam Rivera (Rivera provided scene descriptions; AI generated video sequences; Rivera edited and color graded) Music: "Neptune." AI-generated by Wondera, concept by Sofia Razo (Razo specified genre and mood; AI composed and produced track; Razo added transitions and mastered) Visual Art: Pluto Demoted. AI-generated by Midjourney, concept by Kai Thompson (Thompson crafted prompts; AI generated imagery; Thompson selected from 50+ variations and refined details) Note: In the US, AI-generated content cannot be copyrighted. Credit: AACC 1.1 by Dave Malone. Creative Commons (CC BY). github.com/dzmalone/aacc, davemalone.net/aacc](https://janefriedman.com/wp-content/uploads/2026/01/AACC.png)
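For creators who publish through scripts or site templates, the two attribution patterns shown in the graphic could be produced automatically. The Python sketch below is only an illustration under that assumption: the `Attribution` class and the `format_attribution` function are hypothetical names made up for this example and are not part of the AACC repository.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Attribution:
    """Hypothetical record for one work's AI disclosure."""
    creator: str                 # the human creator's name
    ai_name: str                 # the AI tool's name
    category: str                # "assisted" or "generated"
    note: Optional[str] = None   # optional parenthetical describing the AI's role


def format_attribution(a: Attribution) -> str:
    """Build an attribution line following the two patterns in the graphic."""
    if a.category == "assisted":
        line = f"By {a.creator}, AI-assisted by {a.ai_name}"
    elif a.category == "generated":
        line = f"AI-generated by {a.ai_name}, concept by {a.creator}"
    else:
        raise ValueError("category must be 'assisted' or 'generated'")
    # The parenthetical is optional; append it only when provided.
    if a.note:
        line += f" ({a.note})"
    return line


# Names below are the fictional examples from the graphic.
print(format_attribution(Attribution(
    "Sarah Chen", "Apertus", "assisted",
    "word choice and line break suggestions")))
print(format_attribution(Attribution(
    "Kai Thompson", "Midjourney", "generated")))
```

The category is kept as an explicit field rather than inferred, since deciding between the two labels is a judgment the creator makes, not something a script can determine.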

AI-Assisted

- The creator originates and drives the work

- AI contributes through generation, modification, or enhancement at any stage

- The creator makes all major creative decisions and is responsible for the final work

Authors are already working this way, even without formal labels. In a recent BookBub survey of authors (69% self-published; 6% traditionally published; 25% both), some writers described using AI as an editor for “research, grammar edits and sometimes rephrasing,” or as a developmental editor to “talk about plot, toy with character profiles, work through structural templates.”

One explained using AI for “drafting passages and scene descriptions; rewriting passages.” The author emphasized their use wasn’t “blind” generation but material that was “reviewed/accepted/rejected to stay within my voice and vision.”

AI-Generated

- AI creates the primary content from the creator’s prompts and direction

- The creator’s role is conceptual, curatorial, and editorial

Another surveyed author explained: “I see it like an assistant or even a ghostwriter at times … I come up with the idea and play with AI to make it better. Then AI purges a first draft and I take over as the author from there.”

These two AACC categories cover the full spectrum, from using AI to polish a sentence to having it generate an entire first draft. When this type of consistent labeling is missing, problems emerge.

Why this framework is needed: examples from mainstream artists

AI-assisted novel: Tokyo-to Dojo-to by Rie Kudan

In 2024, author Rie Kudan admitted (after the fact) that 5% of her novel was “lifted verbatim from ChatGPT.” Would transparent labeling from the start have changed the conversation around her award-winning work?

AI-assisted film: The Irishman

In this 2019 Martin Scorsese film, editors used the AI deepfake software FaceSwap to make the movie’s leads, Robert De Niro, Al Pacino, and Joe Pesci, look younger. Film audiences largely accept AI-assisted visual effects, but I argue that transparent disclosure should still be the standard.

AI-assisted or AI-generated music: Telisha “Nikki” Jones

Recently, Telisha “Nikki” Jones as Xania Monet snagged a three-million-dollar record deal for her music created with AI. She writes the lyrics, but Suno’s AI does the vocals. Is her music AI-assisted or AI-generated?

AI-generated music: The Velvet Sundown

The Velvet Sundown streamed music on Spotify without at first acknowledging how they created their music. They later updated their Spotify profile to remark that their music project was “composed, voiced, and visualized with the support of artificial intelligence.” Those keywords point to AI-generated work.

AI-generated novel: Death of an Author by Stephen Marche

In an interview with Joanna Penn, Stephen Marche was transparent that AI accounted for 95% of his novel, Death of an Author. Though Marche described his role as “curatorial” and himself as the work’s “creator,” the substantial AI use classifies this as AI-generated.

Without a consistent framework, every artist handles AI disclosure differently, if they disclose at all. Audiences often can’t tell what role AI played in the work. Artists don’t know what’s expected of them. That’s the problem this framework solves.

Moving forward

Admittedly, the AACC framework won’t solve every problem with AI in creative work. Like many artists, I have ethical concerns about how most AI models were trained. Major companies (with exceptions like Apertus) used copyrighted works without artist consent or compensation. Their data centers carry environmental costs. These are real issues.

But the hard truth: just as illegal file-sharing through Napster eventually evolved into licensed platforms like Spotify and Pandora, the current wave of lawsuits against AI companies will likely establish proper licensing and payment systems. That’s the long game. In the meantime, artists have an immediate choice: use AI transparently or leave audiences guessing.

For young writers and artists, I echo musician Nick Cave’s warning about leaning too heavily on AI generation in your art. Don’t sidestep “the inconvenience of the artistic struggle” by going straight to the easy commodity. That struggle is where you develop your voice and your art. There are no shortcuts. AI is a tool, and like any tool, it reveals the skill of the person using it. An inexperienced curator produces inexperienced work.

For those of us using AI as a legitimate tool, transparency shouldn’t be complicated. Label your work. Help audiences understand your process. Use the framework or create your own, but be honest about how you’re working.

The AACC framework is open-source and available at GitHub and at my website. Two simple categories with clear attribution. Whether you’re AI-curious, AI-positive, or still figuring it out, I encourage you to be transparent. It’s that simple.

Dave Malone is a poet and screenwriter from the Missouri Ozarks. His ninth poetry collection 53 is forthcoming this year from Bass Clef Books, and his short film Tennyson’s Maud is in post-production. His work has been featured on NPR and published in numerous literary journals, most recently Bellevue Literary Review. Recognizing the need for AI transparency in creative work, he developed the AACC framework. Website and newsletter: davemalone.net | TikTok: @poetmalone

Very informative. I think I am in the realm of AI-assisted in that I create biographies of the main characters. Then present it to Claude, asking if this is enough information to create an Enneagram. Claude comes back with follow-up questions, which I answer. Claude then chooses an Enneagram based on my biography and answers to the follow-up questions. Am I correct in thinking this is AI-assisted work with Claude?

Changing the subject, I have many wonderful memories of the Missouri Ozarks as my grandparents lived near Osage Beach, MO. Thanks for triggering some of those recollections.

Thanks, Keith. Yes, I would classify this type of contribution as AI-assisted. In your case, you are having some back-and-forth, and then ultimately you get to decide what to go with. If Claude chooses Enneagram 1, but you are thinking, well, my character is interested in more external validation and really should be an Enneagram 3, then you can make that executive decision. Refining character biographies is a great way to brainstorm with AI. Thanks for sharing that.

Nice. That’s a great part of the country up there!

Thanks, Dave, great column. I’m a self-published non-fiction author and don’t use AI to generate content at this point. However, I will use it later this year when I revise one of my books to create a list of terms for a glossary that I’ll write, as well as an index. I will also be sure to mention up front that I used AI to generate both. Otherwise, I have no plans to use it. But never say never–and hopefully labels like yours will come into widespread use. Cheers.

I appreciate that, Tim. Those sound like excellent tasks for AI (and ones that will save you a boatload of time!). Best of luck with your book revisions.

This is a refreshing look at this issue. I personally would prefer an initial category where AI tools were used for research and developmental editing and had no role in creating the actual language of the work.

I can’t imagine many authors acknowledging *any* AI role. The backlash in the writing community is quite severe. Look at the Nebula Awards, for example.

Thanks, Patrick. I think you raise a pertinent issue. I do think both would fall under AI-assisted. I’d say that writers using AI for “research” or “developmental editing” could simply add that in their attribution. For instance, AI-assisted by ChatGPT (research).

In some ways, I feel like research with AI is a given (especially given the DuckDuckGo, Google, and Microsoft tools). But in a world where we need transparency, for now, I think it’s wise to acknowledge even research.

You make good points. I’m aware of Stephen Marche and Joanna Penn being forthcoming about their AI use. And I hope we can all learn from what is happening with the Nebula Awards.

How very, very insightful and helpful this was for me. I have a book subtitle that I arrived at by running a sample past AI, then tweaking one of the ten offerings. Nothing substantive. Surprised myself, though.

I am so glad you found it helpful, Gordon!

The AI-Assisted and AI-Generated framework is useful, but I believe these two distinctions don’t go far enough. As a writer, I have little regard for AI-Generated work, though I know there’s a market for it. But for a novelist like me, the “AI-Assisted” designation is too limited. I wrote a blog post on my website (Brywig.com) labeled “Reflection, Not Conception” that puts the limits on AI assistance that I’ve stored as a foundational file in ChatGPT. Though it’s required some iterative training, Chat now helps me only with research and analysis of my work. I prevent it not only from writing my prose but also from helping me brainstorm such tasks as plotting, scene development, and the exploration of character motivation. This serves two critical functions for me: 1) It ensures work born from unique ideas and experience rendered in the language that can directly express those personal concepts and episodes and 2) It allows me to continue to polish my craft without outsourcing the work that would prevent me from realizing the full potential of my goal of creating novel explorations of the nature of family.

I appreciate your insights, Bryan. And I read your blog post; thanks for sharing that. I like how you are using Chat-Claude-Perplexity to your own ends and on your own terms. I appreciate that you enlist AI as a limited “co-developer” but your role is clear: “As creator, I’m responsible for the inspiration, ideation, and writing of my work.”

So re this framework, given you think the AI-assisted designation is too limited, what do you think about the parenthetical where creatives can explain their usage more in depth? I guess I should first ask, how do you see the AI-assisted label as limited? What needs to be added? (Of note, I started with 4 labels and carved down to 2, so I am very curious.) Thanks!

Thanks for the opportunity to respond, Dave, and for starting the conversation about qualifying the use of AI that I think is critical to both the authors and readers navigating this tool while trying to protect the human spirit that beats at the heart of the most moving work. For me, the three divisions I’d like to see are AI-generated, AI-collaborated, and AI-assisted, where the latter is strictly limited to the analysis and research guardrails I apply for development of my own work. Whatever the standards, our industry seems ripe for these criteria. I think they’re especially relevant for the literary agents who are gatekeepers for the Big Five. After sending out more than a hundred queries for my latest novel, I was flummoxed by this boilerplate language that appeared in many Query Manager intake forms: “Did you use AI for the creation of this manuscript OR its marketing materials.” Such language seems to imply a blanket rejection of even the most discretionary use of this tool in applications that would never be reflected on the pages a reader holds in their hands.

Hey Bryan. I’d like to say again, I really respect how you’re approaching AI and your work (relegating AI to analysis and co-development, not creation or ideation). Those personal guardrails you’ve implemented are impressive and inspiring.

I hear you. I wish we could have a 3-part system like you suggest. I just don’t think we can. But I’d love to be proven wrong.

To me, the issue is how the mainstream platforms (from writing and art to business) have already categorized AI attribution for some time, as you probably know. There are a lot of labels to keep track of. In addition to AI-collaborated, there are AI-enhanced, AI-enabled, AI-driven, and AI-augmented.

Originally, for this framework, I had an enhanced label and two different types of AI-generation. Too much.

With the plethora of labels, the two that seemed to have the most understandable definitions across platforms were AI-assisted and AI-generated. So my thought was to include the parenthetical note in the attribution framework, so that folks can be more exact about their processes.

Ideally, this discussion would have happened five or more years ago, and 3 or even 4 attribution labels would have been defined. I believe that if Maslow’s Hierarchy can have 5 understandable and easily-remembered levels, then we could have had 4 understandable and memorable levels in AI attribution, but I fear that ship has sailed.

And yes! Again an excellent point about the Big 5 publishers and literary agents who simply don’t understand that writers can use AI in meaningful ways.

I think from your list, enhanced and augmented are synonyms. Maybe enabled and driven too, because I cannot see the difference.

Hi, Marina. It’s hard to decipher, right? This article helped me (I think, lol) to get a handle on AI-enhanced and AI-enabled.

https://www.ascendstl.com/press/ai-enabled-and-ai-first-whats-the-difference

I don’t think this categorization was coined by you, Dave, am I right? I work with authors and I know Amazon, for instance, asks authors to declare if their work is AI-generated. Please do clarify.

Hi, Priya. The two category names I chose were not coined by me. I noticed across many platforms, there were many labels used, and I landed on two that I felt could be most understood and most easily wielded. You can see my above response to Bryan for a little more on that. Thanks for asking!

One challenge I see is that it assumes only one AI assisted platform – where you might want to use one platform for something and a different platform for something else. This is going to become more common as we get more and more specialized AI platforms.

Hi, Rebecca. Good call. Those parentheticals could get a bit long and cumbersome! I foresee a version 1.2 in the mix, lol.

What about 100% human-written works? Like the book “Helm” by Sarah Hall, which is specifically denoted as “human written” on the cover. Having read it, the work impresses me as a uniquely human-written work. I write on a mechanical typewriter. It’s slow and cumbersome to edit, but I feel it’s worth it to make something 100% human. Is there any value in this at all anymore, and will readers even care in the not-so-distant future?

Hi Jack: I believe there will always be artisans. Mass-produced products exist alongside handcrafted things. The potter and the woodworker continue their craft despite the existence of IKEA.

For those using AI, I’d really like to hear your thoughts about Bryan’s suggestion for a third label that would designate when AI is only used for research and analysis.

Yes, this is what I use AI for, and namely it is the Google incorporated summary which I use in research, given that it translates and summarizes information from several sources in several languages.

Does that mean everybody who uses Google search uses AI for research?

Thanks for sharing this. Hmmm… I don’t know enough about that feature to weigh in completely, but I don’t think you can say that everyone who uses Google search is then using AI…

I’m another person who uses AI. For me personally, your AI Generated vs AI Assisted labelling is sufficient, along with a requirement to provide a sentence or two about the details for the AI Assisted category. People who care about those details can always read the label more carefully. For me, how much I use AI varies in each piece of writing, so more labels would just mean more complexity.

Thanks for weighing in, Elena. I appreciate this feedback. I certainly agree that more labels mean more complexity and confusion. I had 4 categories, then 2, now I’m back to 3 so that I can include Human Authored, as that is an important designation to include given the recent Authors Guild certification. If interested, here’s the updated graphic & info at GitHub.

Here is my question on using AI. If you submit your creative work to AI for development or editorial analysis, are you also giving AI the access to your work for future use as in training and generative work? I really can’t understand how it could protect your work from any future compensation from AI companies against unlicensed use if you use their AI to assist you in creating the work in the first place.

Hi Arlene: For AI tools you pay to use, you can turn off training. Most enterprise systems (those used for business) do not train on work that’s accessed or uploaded.

Thanks for that answer. Then the only two questions are: do you want to pay for that and do you trust the AI companies to honor that promise not to train on your work? I guess I find it hard to trust them. They have already trained on a lot of copyrighted work without permission or compensation. That is why I won’t pay to use them. There is no way I could know what they are doing secretly.

The list of businesses using AI is very, very long at this point and includes major publishers such as Penguin Random House, HarperCollins, Pearson, etc.

Everyone has their own risk tolerance, but any of these AI companies would go out of business rapidly if major corporations thought their proprietary information were being purposely compromised. That said, even if these models did train on my work, it doesn’t make a difference to me personally or professionally. I don’t think I’m losing anything. Others may disagree on principle.

Great question, and thanks for answering this, Jane. For those who use Claude, you can turn off training in the Privacy Settings by toggling the training switch, which is on by default.